By David F. Salisbury

In the foreseeable future, robots will stick steerable needles in your brain to remove blood clots, and capsule robots will crawl up your colon to reduce the pain of colonoscopies. “Bionic” prosthetic devices will help amputees regain lost mobility, and humanoid robots will help therapists give autistic children the skills to live productive lives. Specialized rescue robots will take an increasingly prominent role in responding to natural disasters and terrorist attacks.

These aren’t futuristic flights of fancy, but some of the research projects at Vanderbilt that are blazing the trail toward increased use of “smart” devices and robotics in surgery and a variety of other applications.

The growing capabilities of these complex electromechanical devices are the product of decades of basic research performed at a number of the nation’s top research universities, including Vanderbilt, and applied by high-tech companies that have incorporated advances in areas such as pattern recognition, motion control, kinematics and software engineering into products ranging from automated vacuum cleaners to bomb-disposal robots.

Vanderbilt University Medical Center has been involved in robotic surgery for more than a decade. It was an early adopter of Intuitive Surgical’s da Vinci surgical robot and performed its first surgical procedure with it in 2003. This early commitment to robotic surgery intensified the partnership between campus engineers and surgeons interested in developing smart medical devices, a partnership that in the intervening years has grown into a multimillion-dollar enterprise, making Vanderbilt one of the primary centers in the country for basic research in medical robotics.

“Today we have a total of 25 investigators with $25 million in research grants, and robotics is an important part of our effort,” says Benoit Dawant, the Cornelius Vanderbilt Professor of Engineering. Dawant directs the Vanderbilt Initiative in Surgery and Engineering (ViSE), established in 2011.

Sensory Awareness

One of the newest projects, titled Complementary Situational Awareness for Human–Robot Partnerships, received a five-year, $3.6 million grant in October as part of the National Robotics Initiative. The project was the idea of Nabil Simaan, associate professor of mechanical engineering and otolaryngology, who assembled an interdisciplinary team at Vanderbilt that will work closely with similar teams at Carnegie Mellon and Johns Hopkins universities.

“Our goal is to establish a new concept called ‘complementary situational awareness,’” says Simaan. “Complementary situational awareness refers to the robot’s ability to gather sensory information as it works and to use this information to guide its actions.”

Surgeons performing minimally invasive surgery operate through small openings in the skin. As a result, they can’t directly see or feel the tissue on which they are operating, as they do in open surgery. Surgeons have attempted to compensate for the loss of direct sensory feedback through preoperative imaging, in which techniques like MRI, X-ray and ultrasound are used to map the internal structure of the body before they operate. They have developed miniaturized lights and cameras to provide them with visual images of the areas where they are operating, and methods have been developed to track the position of the probe as they operate and plot its position on the preoperative maps.

Now, Simaan and his collaborators intend to take these efforts to the next level by creating a system that acquires data from numerous types of sensors as an operation is underway. This data will then be integrated with the preoperative information to produce dynamic, real-time maps that track the position of the robot probe precisely and show how the tissue in its vicinity responds to its movements.
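One simple way to picture this kind of integration is as a weighted blend of two estimates of the same quantity, where the less certain estimate counts for less. The sketch below is illustrative only and is not the method used by Simaan’s team: the function name `fuse_estimates`, the one-dimensional position and the variance values are all assumptions made for the example.

```python
def fuse_estimates(prior_mean, prior_var, meas_mean, meas_var):
    """Blend a preoperative estimate with an intraoperative measurement.

    Hypothetical helper: a scalar inverse-variance (Kalman-style) update.
    Each estimate is a (mean, variance) pair for one coordinate, in mm.
    """
    if prior_var <= 0 or meas_var <= 0:
        raise ValueError("variances must be positive")
    # The gain is the share of trust given to the new measurement.
    gain = prior_var / (prior_var + meas_var)
    fused_mean = prior_mean + gain * (meas_mean - prior_mean)
    # The fused estimate is never less certain than the prior.
    fused_var = (1.0 - gain) * prior_var
    return fused_mean, fused_var
```

For instance, if the preoperative map places a structure at 10.0 mm and a live sensor reads 12.0 mm with the same variance of 4.0, `fuse_estimates(10.0, 4.0, 12.0, 4.0)` returns `(11.0, 2.0)`: the midpoint, with half the uncertainty.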

“In the past we have used robots to augment specific manipulative skills,” says Simaan. “This project will be a major change because the robots will become partners not only in manipulation but in sensory information gathering and interpretation, creation of a sense of robot awareness, and in using this robot awareness to complement the user’s own awareness of the task and the environment.”

On a somewhat smaller scale, Pietro Valdastri, assistant professor of mechanical engineering and medicine, concentrates on capsule robots. So far in his career, he has experimented with a jellyfish capsule that propels itself with tiny fins, a centipede capsule with 12 legs, a capsule with tiny propellers, and a capsule that uses a cellphone vibrator for locomotion.

Capsule robots could help lower colon-cancer mortality rates by making routine screenings more palatable to patients.

Currently, Valdastri is concentrating on the design he originated at Scuola Superiore Sant’Anna in Italy before moving to Vanderbilt. He thinks a magnetic air capsule (MAC) has the best chance of replacing the infamous colonoscopy.

The MAC is equipped with all the capabilities of a traditional colonoscope—camera, LED lights, a channel for inserting tools needed to remove polyps, and so on—and is the size of the smallest colonoscope. Instead of being pushed through the colon, however, the capsule is pulled by a powerful magnet outside the body, controlled by a robot arm. In addition to the thin wires connecting the camera and lights, the capsule is hooked up to a small air tube that provides an air cushion to allow it to glide smoothly. The air also can be used to inflate the colon in case the capsule gets stuck or to uncover portions of the lining hidden from view. A sensor on the capsule detects the strength and direction of the magnetic field, which allows the operator to track its location and orientation accurately.

Valdastri, working with his laboratory team and with Dr. Keith Obstein, assistant professor of medicine and mechanical engineering, has developed an improved version they hope to bring to clinical trials in the next three to four years.

Each year colon cancer claims more than 600,000 lives worldwide, despite the fact that it can be treated with a 90 percent success rate when caught early enough. “We hope to increase the number of people who undergo routine screenings for colon cancer by decreasing the pain associated with the procedure,” says Valdastri. “We also hope to improve its effectiveness.”

Intracranial hemorrhaging is another deadly illness whose treatment could be transformed by robotic surgery. Last year Robert Webster, assistant professor of mechanical engineering and neurological surgery, attended a conference in Italy where one of the speakers ran through his wish list of useful surgical devices. When the speaker described the system he would like to have to remove brain clots, Webster couldn’t help breaking out in a big smile: He had been developing just such a system for the previous four years.

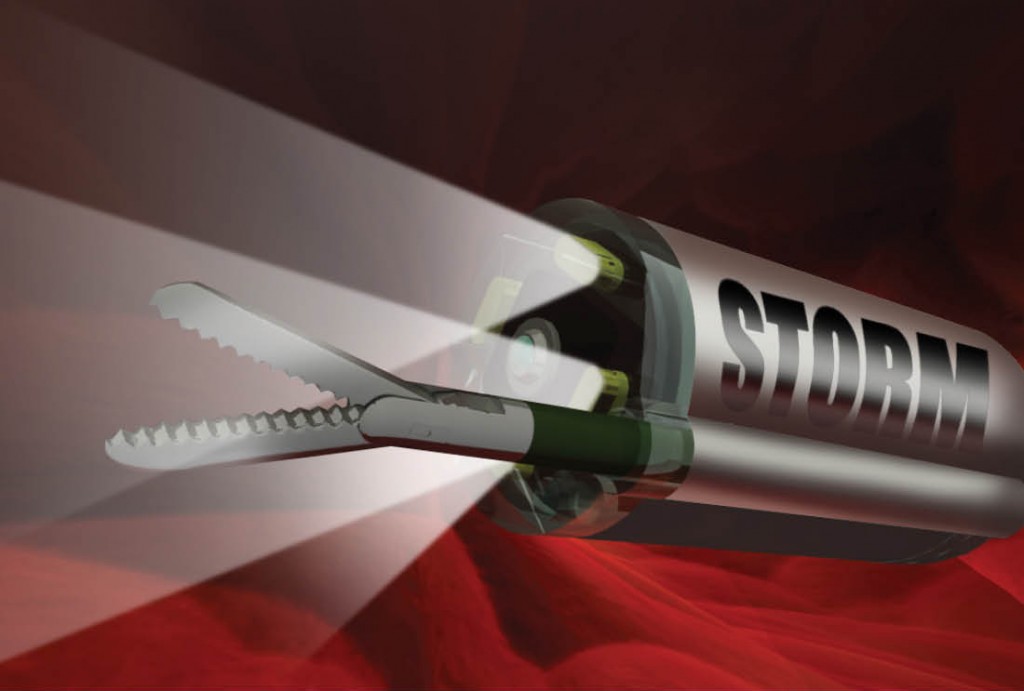

Webster’s design, which he calls “active cannula,” consists of a series of thin, nested tubes. Each tube has a different intrinsic curvature. By precisely rotating and extending these tubes, an operator can steer the tip in different directions, allowing it to follow a curving path through the body. The single-needle system required for removing brain clots is much simpler than the multi-needle system Webster had been developing for transnasal surgery. When he told his collaborator, Dr. Kyle Weaver, about the new application, Weaver was enthusiastic.
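The steering principle can be sketched with a little geometry. The example below is a deliberately simplified model, not Webster’s actual design or control software: it assumes just two tubes, a rigid straight outer tube and an inner tube that bends along a circular arc of constant curvature once it leaves the outer tube. Real nested tubes also bend one another elastically, which this sketch ignores. The function name `cannula_tip` and all parameters are hypothetical.

```python
import math

def cannula_tip(outer_len, inner_len, curvature, rotation):
    """Tip position (x, y, z) for a simplified two-tube cannula.

    Assumptions (illustrative only): the outer tube is straight and rigid
    along the z-axis; the inner tube follows a circular arc with the given
    curvature (1/length units) over the arc length `inner_len` that sticks
    out of the outer tube; `rotation` (radians) spins the inner tube about
    the z-axis.
    """
    if abs(curvature) < 1e-12:
        # A straight inner tube simply extends the outer tube.
        radial, axial = 0.0, inner_len
    else:
        bend = curvature * inner_len              # total bend angle of the arc
        radial = (1.0 - math.cos(bend)) / curvature
        axial = math.sin(bend) / curvature
    x = radial * math.cos(rotation)
    y = radial * math.sin(rotation)
    z = outer_len + axial
    return (x, y, z)
```

In this model, rotating the inner tube sweeps the tip around a circle, while extending either tube moves it forward, so the two motions together let the tip reach points off the straight-line axis.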

“I think this can save a lot more lives,” says Weaver, assistant professor of neurological surgery and otolaryngology. “There are a tremendous number of intracranial hemorrhages, and the number is certain to increase as the population ages.”

Smart Prosthetics

As a teenager Michael Goldfarb, the H. Fort Flowers Professor of Mechanical Engineering, got a summer job in the prosthetics department of the local Veterans Affairs hospital. The experience hooked him on the challenge and reward of designing advanced prosthetic devices that are substantially smarter, more capable, more active and more interactive than those currently available.

Craig Hutto, right, lost his leg in a shark attack on a Florida beach eight years ago. For the past few years, he has been working with the lab of Professor Michael Goldfarb, left, to test the first lower-leg prosthesis with powered knee and ankle joints. Hutto received his master of science degree in nursing from Vanderbilt in May. (John Russell)

His first success was developing a truly “bionic” arm for the Defense Advanced Research Projects Agency. He concluded that existing electromechanical technology was inadequate to provide the prosthesis with enough energy to match a natural arm’s use, so he designed a mini-rocket motor to power it. The pen-sized device burned the same monopropellant used in space-shuttle thrusters and produced small amounts of water when it burned.

Although the arm met or exceeded all the project’s specifications, its radical design meant that getting it approved for general use would be extremely difficult. Therefore, for his next project, a lower-leg prosthesis, Goldfarb returned to batteries and motors. His design was the first to combine powered knee and ankle joints. It is equipped with sensors that detect patterns in the user’s physical interactions with the device, and it uses these patterns to move the joints in synchrony with the user as he or she walks, runs, and ascends or descends stairs.

“When it’s working, it’s totally different from my current prosthetic,” says Craig Hutto, MSN’13, the 25-year-old amputee who has been testing the leg for several years. “My normal leg is always a step behind me, but the Vanderbilt leg is only a split-second behind.”

A commercial version of the artificial leg is being developed by Freedom Innovations, a leading developer and manufacturer of lower-limb prosthetic devices. In addition, the Rehabilitation Institute of Chicago has developed a neural link for the leg, making it the world’s first thought-controlled bionic leg.

Goldfarb’s research team also has produced an artificial hand that can shift quickly between different grips and a powered exoskeleton (called the Indego) that not only allows people with paraplegia to stand and walk but is also compact enough to wear in a wheelchair. These designs, too, have been licensed by companies interested in producing and selling commercial versions. The exoskeleton in particular—with its powerful, battery-operated electric motors that drive hip and knee joints—is getting a lot of attention. When Popular Mechanics magazine announced its Breakthrough Awards recently, it named Goldfarb and his former graduate student Ryan Farris, MS’09, PhD’12, among “10 Innovators Who Changed the World in 2013.”

Jason Koger lost both his arms below the elbow when he came into contact with downed power lines while riding his ATV. He now uses prosthetic arms and hands developed at Vanderbilt—just one of many collaborations between the School of Engineering and School of Medicine. (Daniel Dubois)

While robots promise to provide new mobility to amputees and paraplegics, they may also help address the public health challenge posed by the rising number of children diagnosed with autism spectrum disorder (ASD). The rate of ASD diagnosis has jumped by 78 percent in just four years: Today, one in 88 children (one in 54 boys) is diagnosed with ASD. The trend has major implications for the nation’s health care budget because estimates of the lifetime cost of treating patients with ASD run four to six times higher than for patients without the disorder.

In collaboration with Zachary Warren and Julie Crittendon at the Treatment and Research Institute for Autism Spectrum Disorders at the Vanderbilt Kennedy Center, Nilanjan Sarkar has demonstrated that the special fascination children with ASD have with robots can be used to help treat their condition. The team has designed an interactive system that uses a 2-foot-high android robot as its “front man.” Their research has shown that the robot can be nearly as effective as a human therapist in training children 2 to 5 years old who have been diagnosed with ASD to develop the fundamental social skills they need but have difficulty mastering.

“A therapist does many things robots can’t do,” says Sarkar, a professor of mechanical engineering and computer engineering. “But a robot-centered system could provide much of the repeated practice that is essential to learning.”

First Responders

In contrast to robots’ novel role as a teacher’s assistant, their use as first responders in emergency situations has become firmly established during the past decade. The first documented use of rescue robots was during the aftermath of 9/11. Researchers from Texas A&M’s Center for Robot-Assisted Search and Rescue (CRASAR) brought several shoebox-sized robots to New York City to help locate victims trapped in the wreckage of the twin towers. They proved their usefulness and have since been part of rescue efforts at the New Zealand Pike River Mine disaster and the Deepwater Horizon oil spill in 2010, as well as the Tohoku earthquake and tsunami and the Fukushima Daiichi nuclear disaster in 2011, among others.

“What we are seeing is a lot of fascinating robot platforms being developed and lots of sensors,” says Robin Murphy, director of CRASAR. “But the trick is putting all this information together in a way that regular people trying to make important decisions can use it—be it the American Red Cross getting an estimate on how many refugees they should plan for, law-enforcement officers who need to know the status of a particular area, or a homeowner who’s trying to determine if he’ll be out of the house and out of work for days or weeks. [The challenge is getting] the right information to the right people at all levels.”

These are precisely the problems that Julie Adams, associate professor of computer science and computer engineering, is addressing in her Human–Machine Teaming Lab. “We are looking at human–robot interaction in realistic environments, including emergency response,” she says.

The relevant acronym is CBRNE: chemical, biological, radiological, nuclear and explosive. Current procedures for dealing with CBRNE incidents rely heavily on face-to-face and radio communication with very limited use of computers or robotics. As the use of rescue robots and unmanned autonomous vehicles grows, however, so too will the importance of computer-based communications and information processing.

In anticipation of this trend, Adams and her students are developing software designed to make sense of the large amounts of information generated during CBRNE incidents from a variety of sources, both human and machine. The software automatically evaluates the relevance of various pieces of information for different types of decision-makers and then displays the information in a graphic format that emphasizes the most relevant data.

For example, paramedical teams are primarily interested in locations where casualties have been found, while members of a bomb squad are interested in the locations where bombs have been located. Accordingly, the software highlights casualty reports on the paramedics’ screens while reports of bomb locations are highlighted on the bomb-squad displays.
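The highlighting rule in this example can be sketched in a few lines. This is a minimal illustration, not the software Adams and her students are building: the `Report` type, the role names and the `RELEVANT_KINDS` table are all hypothetical, and a real system would presumably score relevance in a far richer way than a fixed lookup.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Report:
    kind: str       # hypothetical category, e.g. "casualty" or "bomb"
    location: str   # free-text location for the example

# Assumed mapping from responder role to the report kinds it cares most about.
RELEVANT_KINDS = {
    "paramedic": {"casualty"},
    "bomb_squad": {"bomb"},
}

def build_display(role, reports):
    """Return (report, highlighted) pairs for one role's screen.

    Every report stays visible; only those relevant to the role are flagged.
    Unknown roles get no highlights.
    """
    relevant = RELEVANT_KINDS.get(role, set())
    return [(report, report.kind in relevant) for report in reports]
```

Given the same list of incoming reports, `build_display("paramedic", reports)` flags only the casualty reports, while `build_display("bomb_squad", reports)` flags only the bomb reports, so each team sees the full picture with its own priorities emphasized.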

Over at Vanderbilt’s Institute for Space and Defense Electronics, Arthur Witulski, a research associate professor of electrical engineering, heads a multidisciplinary team that has begun studying the effects of high levels of radiation on robotic first responders for the Defense Threat Reduction Agency.

“The use of robots following the Fukushima Daiichi nuclear power plant disaster gave us the idea,” Witulski says. “Very little is known about performance degradation due to the high levels of radiation on robots like those the Japanese were using. What little testing that has been done has been testing to failure. The question we are asking is, Do radiation-induced changes occur before failure that may reduce a robot’s awareness of its environment or its ability to do meaningful work?”

Although the study is at an early stage, the radiation experts have evidence that the answer to their question is yes. They have found that some range-finding sensors used on robots become unpredictable for a period before they fail. They also have discovered that the microprocessors that serve as the robots’ brains begin to degrade before they stop working altogether. Monitoring microprocessor performance, therefore, may provide a built-in health test, says Witulski.
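A built-in health test of this kind might, in its simplest form, compare how long a fixed workload takes now with how long it took when the robot was known to be healthy. The sketch below is an assumption-laden illustration, not the team’s method: the article does not say which performance measure is monitored, so the timing metric, the function names and the 10 percent tolerance are all hypothetical.

```python
from statistics import mean

def drift_ratio(baseline_times, recent_times):
    """Ratio of recent to baseline mean run time for a fixed workload."""
    return mean(recent_times) / mean(baseline_times)

def needs_attention(baseline_times, recent_times, tolerance=0.10):
    """Flag the processor if its timing has drifted beyond the tolerance.

    Hypothetical rule: any drift, slower or faster, larger than
    `tolerance` (as a fraction of the baseline) is treated as a warning.
    """
    return abs(drift_ratio(baseline_times, recent_times) - 1.0) > tolerance
```

The point of such a check is that it raises a warning while the robot still works, rather than after it has already failed.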

Materials Handling and Logistics

It’s quite a leap from the chaotic environment of emergency response to the orderly world of the warehouse, but here too robots are making inroads. Systems created by the Nashville high-tech startup Universal Robotics are providing a new level of capability and flexibility to this mega-industry.

The company is the direct result of an inspiration that Richard Alan Peters, associate professor of electrical engineering, had while working with NASA on its Robonaut program. The task: Imitate how the brain learns by giving a robot an “egosphere”—a geodesic structure that organizes the sensory input the robot receives by time and spatial direction—and a “neocortex” that analyzes the sensory signals the robot receives and the movements it makes while performing different tasks. Connecting these patterns based on similarity allows the robot to adjust its actions to alterations in its environment, such as differences in the size, orientation and position of the objects it is instructed to identify or changes in lighting—things that ordinary robots must be reprogrammed to handle.

At the time, Peters’ brother David was working in the entertainment industry in Hollywood, and Peters saw this as a golden opportunity for them to start their own business. They convinced Vanderbilt to apply for a patent on the idea, and it became the first patent issued on the architecture of robot intelligence. They formed the company, obtained an exclusive license on the patent—which became the basis for their flagship software product, Neocortex—and set up shop in a garage on property near Nashville International Airport. David reviewed a number of different industries and determined that materials handling and logistics held the greatest promise because of pent-up demand for the ability to automate handling of random objects in a changeable environment.

The brothers started out modestly with a three-dimensional vision system based on off-the-shelf equipment—Kinect motion sensors and Web video cameras—and created a vision-inspection system called Spatial Vision. One of its first uses was by a company that prints blank checks. The system verifies that the name, address, bank code and images are correct and in the proper location. The system is also being used by the Australian company CHEP, the leading provider of shipping pallets in the world, to scan its pallets in 3-D to identify defects in order to reduce scrap and increase the overall quality of its product.

Once they had developed the vision system, the Peters brothers’ Universal Robotics team combined it with a modular Neocortex platform to produce a 3-D vision guidance system, which is being used by several Fortune 200 companies to automatically seal shipping boxes that come in a variety of shapes and sizes, including those the system has never seen before.

“What is special about our system is that it learns from experience. The more you throw at it, the better it gets,” says Universal Vice President Hob Wubbena.

A fascinating example of the system’s adaptability comes from the perfume trade. Perfume vials come in a bewildering variety of shapes and sizes, which makes them very difficult for a robot to handle. But the Neocortex system can learn to recognize and handle even the most exotically shaped bottles.

As these projects suggest, robots are moving beyond the role of extremely intelligent tools to something closer to partners, and Vanderbilt scientists, engineers and physicians are helping lead this unprecedented evolution.